Product Design

UX for Data Management

Reimagining complex data experiences with clearer structure and usability.

Year: 2018

Industry: Data Management / Multi-platform storage

Client: Strongbox Data

Project Duration: 1

Problem Statement

As the platform scaled, the volume and complexity of data outgrew the existing workflows and visual structure. Interfaces designed for smaller datasets became cognitively heavy, inefficient, and inconsistent under increased load.

Users were required to interpret dense information and take critical actions without clear hierarchy or optimized interaction patterns — reducing clarity and slowing decision-making.

Simultaneously, new features were being introduced without a unified framework to govern data presentation and workflow design. Without restructuring how complex data was managed and visualized, the product risked compounding complexity and eroding usability as it grew.

The challenge was to redesign high-density data experiences for clarity and efficiency while establishing scalable standards to support ongoing expansion.

Approach

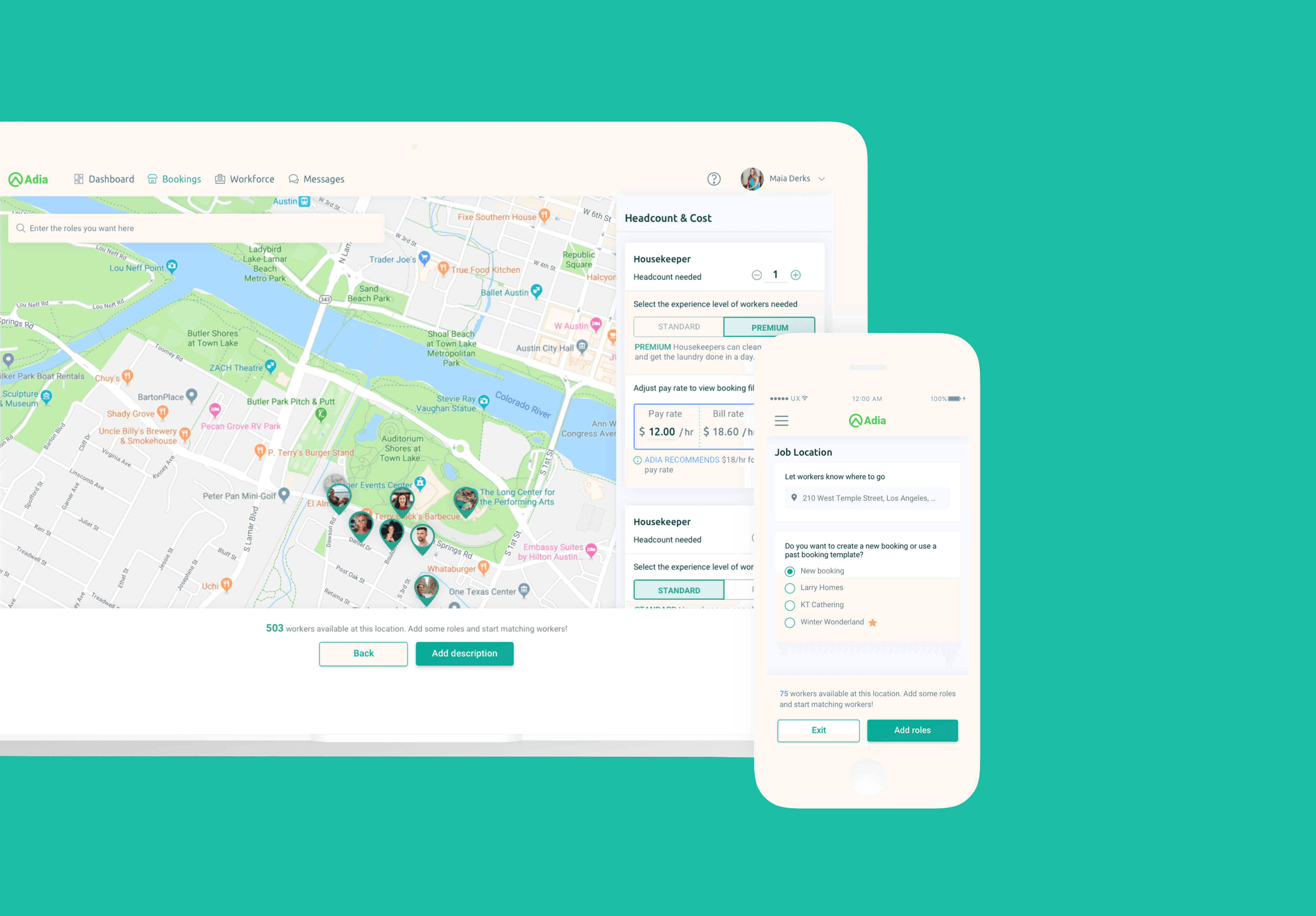

Designing for a data-intensive enterprise platform required reducing cognitive load without reducing capability. The goal was to make complex data operations predictable, transparent, and efficient at scale.

Structural Audit

I conducted a full audit of workflows and UI patterns to identify duplication, inconsistent behaviors, and friction in high-volume data interactions.

Standard users needed to:

Manage metadata and categories at scale

Switch between list and thumbnail views

Preview content without breaking workflow

Execute multi-step actions across servers

Maintain clarity during long-running processes

The key issue wasn’t functionality — it was how complexity surfaced in the interface.

Interaction Modeling

Working closely with product and engineering, I grounded solutions in familiar file-management mental models while adapting them for enterprise-scale datasets.

Exploration prioritized:

Predictable behavior

Clear system feedback (progress, errors, confirmations)

Reduced visual noise

Alignment with brand and technical constraints

Early validation ensured feasibility before advancing to high-fidelity design.

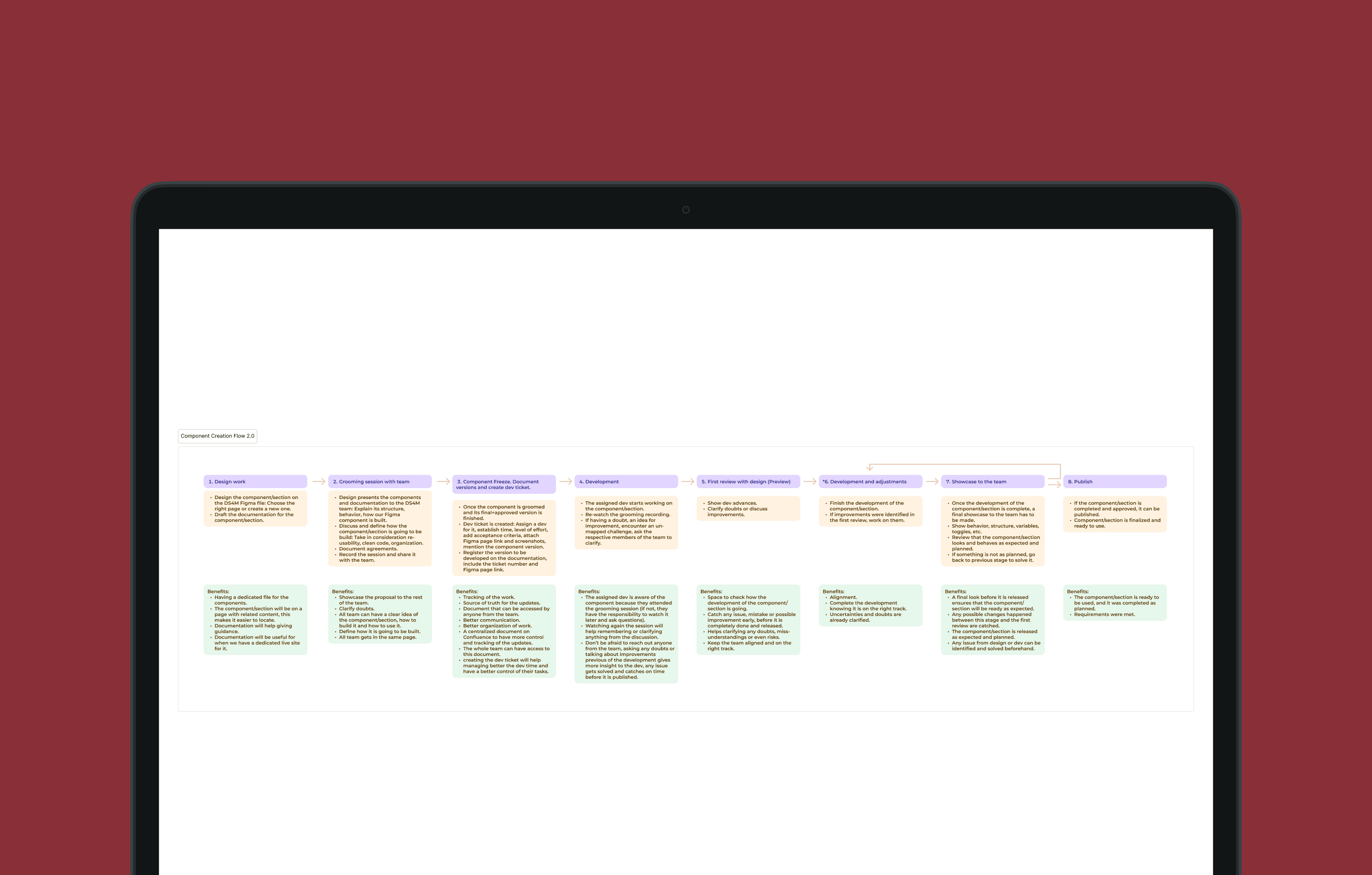

System-Level Redesign

Rather than iterating screen by screen, I restructured the design foundation:

Standardized core components and interaction states

Simplified data tables and dashboards

Introduced stronger visual hierarchy and iconography

Implemented breadcrumb navigation for deep hierarchies

Defined consistent feedback patterns for long-running operations

Established responsive guidelines to prevent layout breakdown

Prototypes and scenario-based flows validated complex interactions before implementation.

Outcome & Impact

The redesign transformed a cognitively heavy data environment into a clearer, more structured experience built for scale.

Key outcomes included:

Simplified execution of high-volume data tasks

Reduced visual fragmentation through standardized patterns

Clear, consistent feedback during long-running or multi-step operations

Stronger alignment with familiar file-management mental models

A reinforced, restructured design system foundation

For users, this meant greater confidence and efficiency when managing complex datasets.

For the product, it established a scalable framework capable of supporting growing data volumes and new features without reintroducing fragmentation.

By addressing workflows, visualization, and system governance together, the platform shifted from reactive complexity to controlled scalability — maintaining capability while significantly improving clarity and trust.

Lessons Learned

1. Research must reflect operational reality.

In data-intensive environments, understanding real workflows is critical. Observing how users categorize, preview, and move large datasets revealed friction that wasn’t visible at the surface level. Strong design decisions came from grounding solutions in actual behavior — not assumptions.

2. Capability should not surface as complexity.

Enterprise products often accumulate powerful features that unintentionally overwhelm users. The challenge is not reducing functionality, but structuring it so complexity is absorbed by the system — not exposed in the interface.

3. Scalability starts with pattern discipline.

Without consistent interaction models and feedback patterns, high-volume data workflows quickly fragment. Establishing reusable behaviors and predictable states prevented future design drift as the product evolved.

4. System feedback builds trust.

In environments involving large datasets and cross-server actions, clear progress indicators, confirmations, and error states are not cosmetic — they are essential to user confidence. Transparent system feedback directly impacts perceived reliability.

5. Collaboration reduces systemic risk.

Continuous alignment with product, engineering, and stakeholders minimized rework and ensured that scalability, responsiveness, and feasibility were addressed early — not retrofitted later.

Designing for complex data systems reinforced a core principle: clarity is a strategic decision. When workflows, visualization, and system foundations are aligned, even high-density enterprise tools can feel controlled, efficient, and trustworthy.
